Occam's Razor in Machine Learning: Why Simpler Systems Win in the Real World

1. When Complexity Fights Back

It started like many machine learning projects do—with ambition and excitement. We were building a time series pipeline to forecast demand across dozens of regions. Multiple models, ensemble layers, dynamic features, custom loss functions—the works.

But when deployment day came, reality hit. The system was fragile, hard to debug, and bloated with components we barely understood. It wasn't smarter—it was just more complicated.

What saved us? Revisiting a centuries-old idea: Occam's Razor.

2. Occam's Razor: A Quick Refresher

Occam's Razor, attributed to 14th-century philosopher William of Ockham, simply states:

"Entities should not be multiplied beyond necessity."

In other words, the simplest explanation—or in our case, solution—is usually the best one.

In machine learning, this isn't just a philosophical ideal. Simpler models often generalize better, are easier to maintain, and are more transparent. Yet in the rush to innovate, we often forget that complexity isn't free.

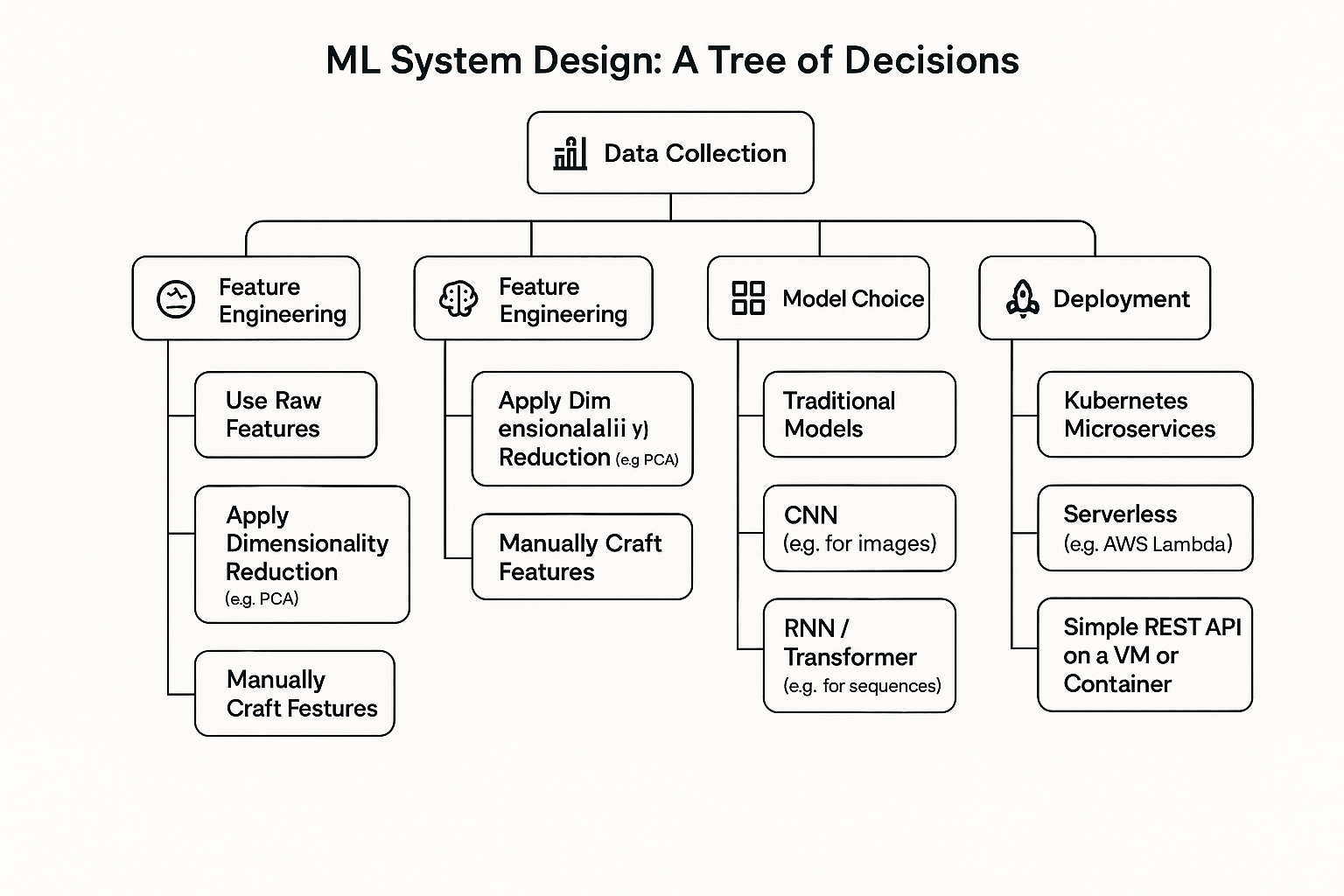

3. The Many Forks in the Road of ML System Design

Designing an ML system is like standing at a decision tree with countless branches:

-

Data collection: Scrape the web, buy third-party data, or stick with what you have?

-

Feature engineering: Use raw signals, apply PCA, or handcraft features?

-

Model choice: Logistic regression? CNN? Transformer-based hybrid?

-

Training strategy: Grid search? Bayesian optimization? Custom schedulers?

-

Deployment: Kubernetes microservices or a simple REST API?

Each decision adds weight. Without a guiding principle, complexity creeps in unnoticed—until it's too late.

4. Occam's Razor in Action: Case Studies

Let's look at real-world domains where simplicity wasn't just better—it was essential.

a. Time Series Forecasting

We once built a demand forecast using an ensemble of LSTMs and XGBoost, with dozens of engineered features.

The simpler version? A univariate ARIMA model with a few calendar features. It performed within 3% of the original and took 1/10th the time to build, deploy, and maintain.

🔪 Razor Rule: Only add complexity after proving the simple version can't cut it.

b. Reinforcement Learning Agents

We tried building an RL agent for resource allocation in a cloud environment. The state space was enormous—think hundreds of dimensions.

Simplifying the state encoding (just CPU, memory, and time-of-day), and reducing reward complexity (binary outcomes) drastically improved training speed and stability.

🔪 Razor Rule: Start with minimal state/action spaces and expand only if the signal is weak.

c. LLMs & Tool-Using Agents

Designing RAG pipelines or GPT-powered agents? It's tempting to chain tools, use advanced embeddings, or engineer multi-step prompts.

But often, a single-pass prompt with a well-scoped context window outperforms multi-hop reasoning. We've seen this especially in customer support bots and document summarization tasks.

🔪 Razor Rule: Don't add tools, memory, or retrieval until simple prompts consistently fail.

5. Why Simpler Often Wins: The Hidden ROI

Beyond performance, simplicity pays off in ways that aren't obvious at first glance:

-

Faster iteration cycles: Less code = fewer bugs = quicker experiments.

-

Easier debugging: Clearer logic paths and smaller search spaces.

-

Lower infra cost: Simpler models often need less compute and memory.

-

Better maintainability: Your future self (or teammate) will thank you.

-

Higher reliability: Fewer moving parts, fewer points of failure.

Complexity is expensive—even when it works.

6. Applying Occam's Razor at Each Stage

Here's how to embed simplicity into each part of your ML lifecycle:

| Stage | Simplicity Strategy |

|---|---|

| Data | Drop features that don't improve baseline. Start with fewer modalities. |

| Features | Use domain-driven features before autoencoders or embeddings. |

| Model | Benchmark a linear model or tree-based baseline first. |

| Training | Avoid complex tuning until you hit a ceiling. |

| Evaluation | Use simple metrics before diving into custom scoring functions. |

| Deployment | Start with monolith deployment before microservices orchestration. |

7. When Not to Oversimplify

Occam's Razor isn't a straitjacket. Some problems are inherently complex.

-

Underfitting: Too simple? You'll miss patterns.

-

Real constraints: Some domains (e.g. robotics, multi-agent RL) need detailed modeling.

-

User needs: Business logic might demand complexity (e.g. explainability, fairness).

Use the razor wisely: as a compass, not a cleaver.

8. Checklist: Your Occam's Audit

Before your next project goes live, ask:

-

Can a baseline model solve this well enough?

-

Have I removed redundant or unused features?

-

Am I over-tuning marginal gains?

-

Do I understand every component in my pipeline?

-

What's the cost of each added complexity?

If you're struggling to justify a piece of your stack, cut it.

9. Closing Thoughts

Occam's Razor isn't about being lazy—it's about being smart.

In a world of infinite model types, data sources, and toolkits, the real skill is knowing what not to use. Simplicity brings clarity. It brings speed. And more often than not—it brings results.

Next time your project spirals, pause and ask:

What would Occam do?

👉 Have your own "simpler solution" story? Share it with me on LinkedIn or X (@DixitKaivalya). I'm building a collection of ML simplification case studies for an upcoming toolkit—stay tuned!